Asking good questions sits at the heart of learning and evaluation. It helps us explore what is working, why it is working, and for whom. Evaluations provide an opportunity to understand an initiative’s overall progress, including specific aspects of its implementation and influence. A small number of Key Evaluation Questions (KEQs) help provide focus. These are not interview or survey questions; they are high-level questions that draw together evidence from multiple sources to assess results.

Using a theory of change approach alongside a project logic model helps establish measures of success that trace the project’s development and impact over time. These measures must be shaped by key project actors – including funders, project participants, and community members – to ensure relevance. A useful starting framework is the OECD/DAC evaluation criteria, which focus on relevance, coherence, effectiveness, efficiency, impact, and sustainability.

Drawing on these criteria, possible KEQs include:

- Is the initiative delivering on outputs and outcomes as planned? (efficiency and effectiveness)

- Are (or were) the activities and their delivery methods effective? Are there aspects that could have been done differently? (process effectiveness)

- Is the wider project story being told? What range of outcomes (intended and unintended) has the project contributed to – taking account of social, economic, environmental and cultural considerations? (relevance and impact)

- How has the initiative influenced the appropriate stakeholder community, and what capacities has it built? (relevance and impact)

- Has the initiative been delivered on budget? (efficiency)

- Is the project having a positive impact on the key groups and issues identified as important in project design – particularly gender, indigenous peoples, youth and the environment? (relevance and impact)

- Is there evidence that the initiative is likely to grow – scaling up and out – beyond the project life? (sustainability)

Types of evaluation and their purpose

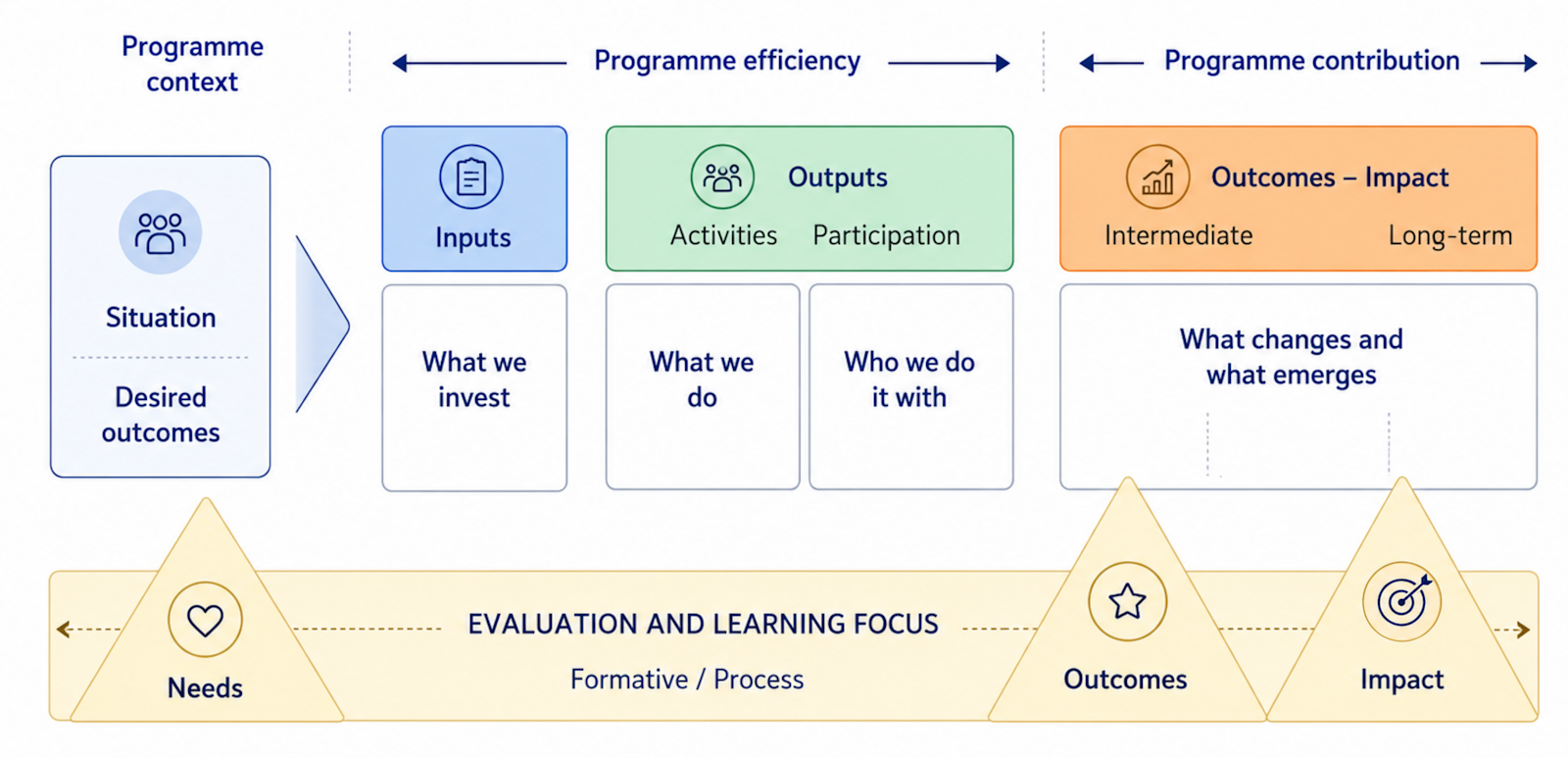

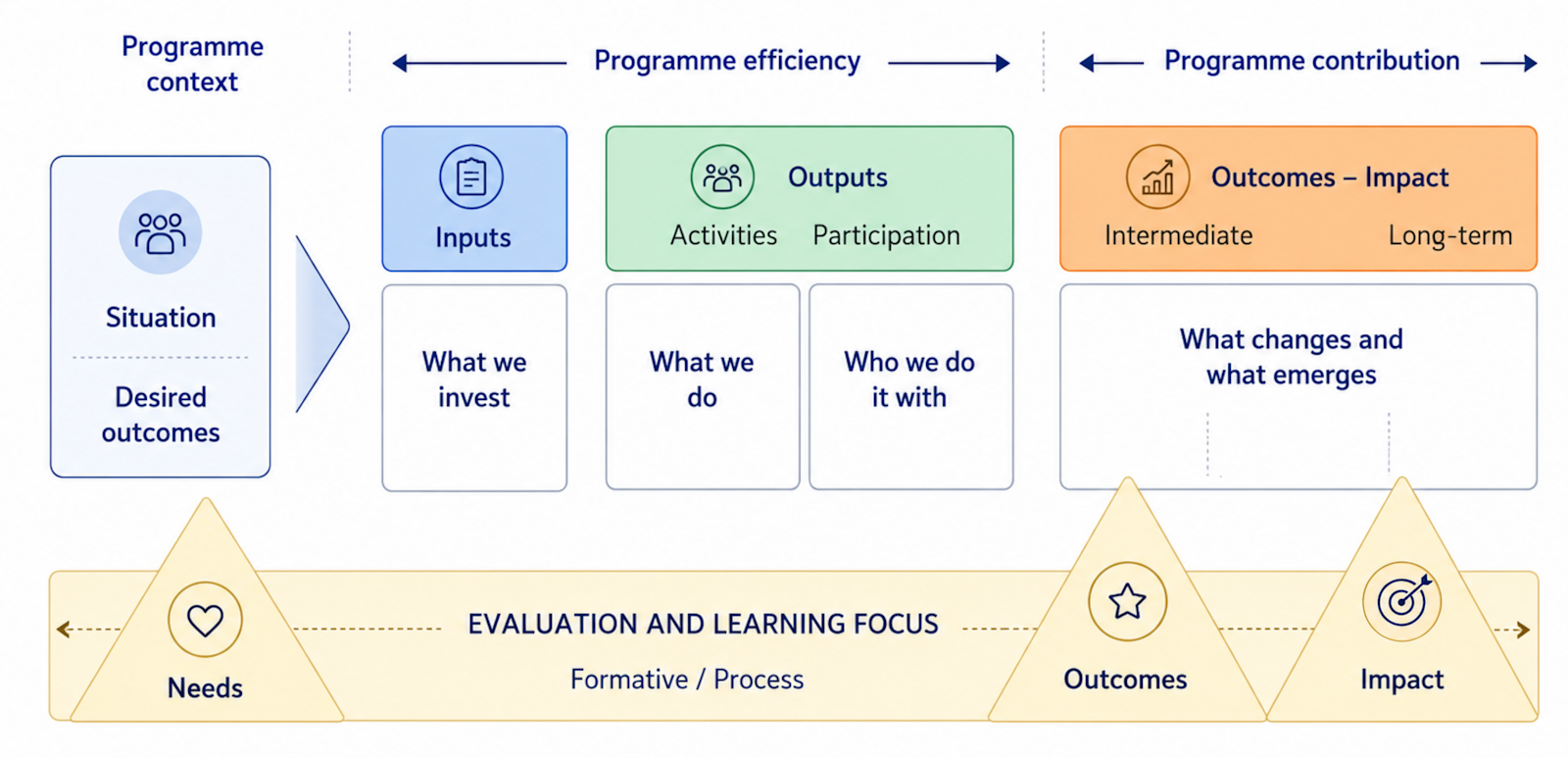

Evaluation types are defined by the questions they aim to answer. A logic model helps outline a project’s development, expected outcomes and success measures. Other frameworks can guide decisions about evaluation intensity and levels of stakeholder engagement. The accompanying diagram shows how different evaluation approaches can be used to explore different parts of a project or change initiative. In practice, most initiatives draw on a combination of methods to understand different aspects of progress.

This version of the logic model is adapted from University of Wisconsin–Extension materials developed by Ellen Taylor-Powell and Ellen Henert (2008). Their original framework has been widely used in evaluation and extension teaching, and has influenced many later logic model resources. This Learning for Sustainability adaptation draws on that earlier logic model tradition, while reshaping the structure for environmental, collaborative and place-based work.

It also reframes the lower band as an evaluation and learning focus, showing how different evaluative questions align with programme context, efficiency, contribution, outcomes and impact. In doing so, it highlights the movement from what is done, to what changes, and then to what emerges over time.

Needs Assessments: These evaluations explore the scale and nature of an issue, identifying who may benefit from a programme. They help design new initiatives or justify the continuation or revision of existing ones.

Monitoring for accountability: Ongoing monitoring tracks whether a programme is being implemented as intended. It highlights issues that need attention early, supporting timely adjustments.

Formative evaluations: Typically carried out during design or early implementation, formative evaluation helps refine and improve initiatives. It may include process studies or early outcome work, with findings used to strengthen programme delivery.

Process evaluations: Process evaluations document how an initiative is delivered. They explore questions such as: What services were provided, and to whom? What resources were used? What challenges emerged, and how were they addressed? This information helps explain how outcomes were achieved and supports replication.

Impact or Outcome Evaluations: These evaluations assess the degree of change produced by a programme. They focus on what happened for participants and systems, and how much of a difference the initiative made. They are important for understanding whether outcomes were achieved and for learning how an initiative contributes to wider change.

Summative Evaluations: Conducted at the end of a programme, summative evaluations synthesise findings across the initiative. They support accountability, provide insight into effectiveness and influence, and help inform future investment decisions.

Useful resources on evaluation planning

A practical guide for engaging stakeholders in developing evaluation questions

Hallie Preskill and Nathalie Jones outline a five-step process for involving stakeholders in developing evaluation questions. The guide includes worksheets and a case study, emphasising the value of seeking diverse perspectives.

Specify the Key Evaluation Questions

This BetterEvaluation resource explains how agreeing on a small set of KEQs helps guide data collection, analysis and reporting. It encourages keeping the number of KEQs manageable and aligning them with the evaluation’s purpose.

Key evaluation questions: A stakeholder-driven approach

This presentation illustrates the idea of KEQs and highlights why they should be shaped with stakeholders. The emphasis is on matching methods to purpose rather than letting methods drive the evaluation.

[* Image: Adobe Stock | Wavebreak]